Over 70% of RICS-regulated firms now use at least one AI-powered tool in their daily practice, yet fewer than a third had a formal AI governance framework in place before March 2026. That gap is no longer acceptable. The RICS Responsible AI Standards in Building Surveys: March 2026 Implementation Checklist for UK Surveyors covers the profession's most significant compliance shift in years: a mandatory professional standard that every RICS member and regulated firm, regardless of size or specialism, is required to meet.

This article breaks down exactly what the standard requires, what building surveyors must do to comply, and how to embed responsible AI use into everyday practice without disrupting service quality.

Key Takeaways 📋

- Mandatory compliance applies to all RICS members and regulated firms globally — no exemptions for sole traders or small practices [2].

- Firms must complete a written material impact assessment to determine whether AI use affects service delivery [1].

- A formal AI risk register with quarterly reviews is required for any AI use with material impact [4].

- Written client notification before AI is used in service delivery is a non-negotiable requirement [4].

- Professional indemnity insurance policies must be reviewed and updated to cover AI-related risks [5].

Why March 2026 Is a Hard Deadline for UK Building Surveyors

RICS published the Responsible Use of Artificial Intelligence in Surveying Practice standard in September 2025, with a lead-in period giving firms until March 2026 to achieve full compliance [3]. That period has now closed.

This is not guidance. It is not advisory. As a RICS professional standard, it is mandatory for all RICS members and regulated firms worldwide, with no exemptions based on firm size, practice area, or geography [2][5]. A sole-practitioner residential surveyor in Wimbledon is held to the same standard as a multinational consultancy.

The standard was developed in response to the rapid proliferation of AI tools across surveying practice — from automated defect detection software and drone-based imaging analysis to AI-assisted report drafting and valuation modelling. RICS recognised that without a clear framework, the profession risked inconsistent quality, client harm, and reputational damage [1].

💡 Key insight: The standard does not ban AI. It mandates that AI is used responsibly, with documented oversight, client transparency, and professional judgement at its core.

For surveyors conducting RICS building surveys, the implications are particularly significant. Building surveys involve high-stakes professional judgements about structural integrity, defects, and property condition — areas where AI errors carry real financial and safety consequences.

The Core RICS Responsible AI Standards in Building Surveys: March 2026 Implementation Checklist for UK Surveyors

The following checklist translates the standard's requirements into practical, actionable steps for building surveyors. Each item corresponds to a mandatory obligation under the RICS framework.

✅ Step 1: Conduct a Written Material Impact Assessment

Before anything else, every firm must determine whether its AI use has a material impact on surveying service delivery [1]. This is not a tick-box exercise — it requires a written record documenting:

- Which AI tools are in use (report drafting assistants, drone analysis software, defect detection apps, etc.)

- The specific tasks each tool performs

- Whether those tasks directly influence the professional output delivered to clients

- The reasoning behind the materiality determination [1]

If the answer is yes — AI use is material — the full suite of compliance obligations applies. If the answer is no, firms must still document that determination and revisit it whenever new tools are adopted.
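The written record described above can be kept in any format. As a minimal sketch, the four documented items map onto a simple Python record. The class name, field names, and the rule that "directly influences client output" equals "material" are illustrative assumptions, not wording from the standard.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class MaterialImpactAssessment:
    """One written materiality record per AI tool (hypothetical structure)."""
    tool_name: str
    tasks_performed: list[str]
    influences_client_output: bool
    reasoning: str
    assessed_on: date = field(default_factory=date.today)

    def is_material(self) -> bool:
        # Simplifying assumption: a tool is treated as material when its
        # tasks directly influence the output delivered to clients.
        return self.influences_client_output

    def missing_fields(self) -> list[str]:
        # Flag empty entries so an incomplete record is not filed.
        missing = []
        if not self.tool_name.strip():
            missing.append("tool_name")
        if not self.tasks_performed:
            missing.append("tasks_performed")
        if not self.reasoning.strip():
            missing.append("reasoning")
        return missing
```

A record with an empty `reasoning` field would be flagged by `missing_fields()`, which mirrors the requirement that the materiality determination is explained and not merely asserted.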

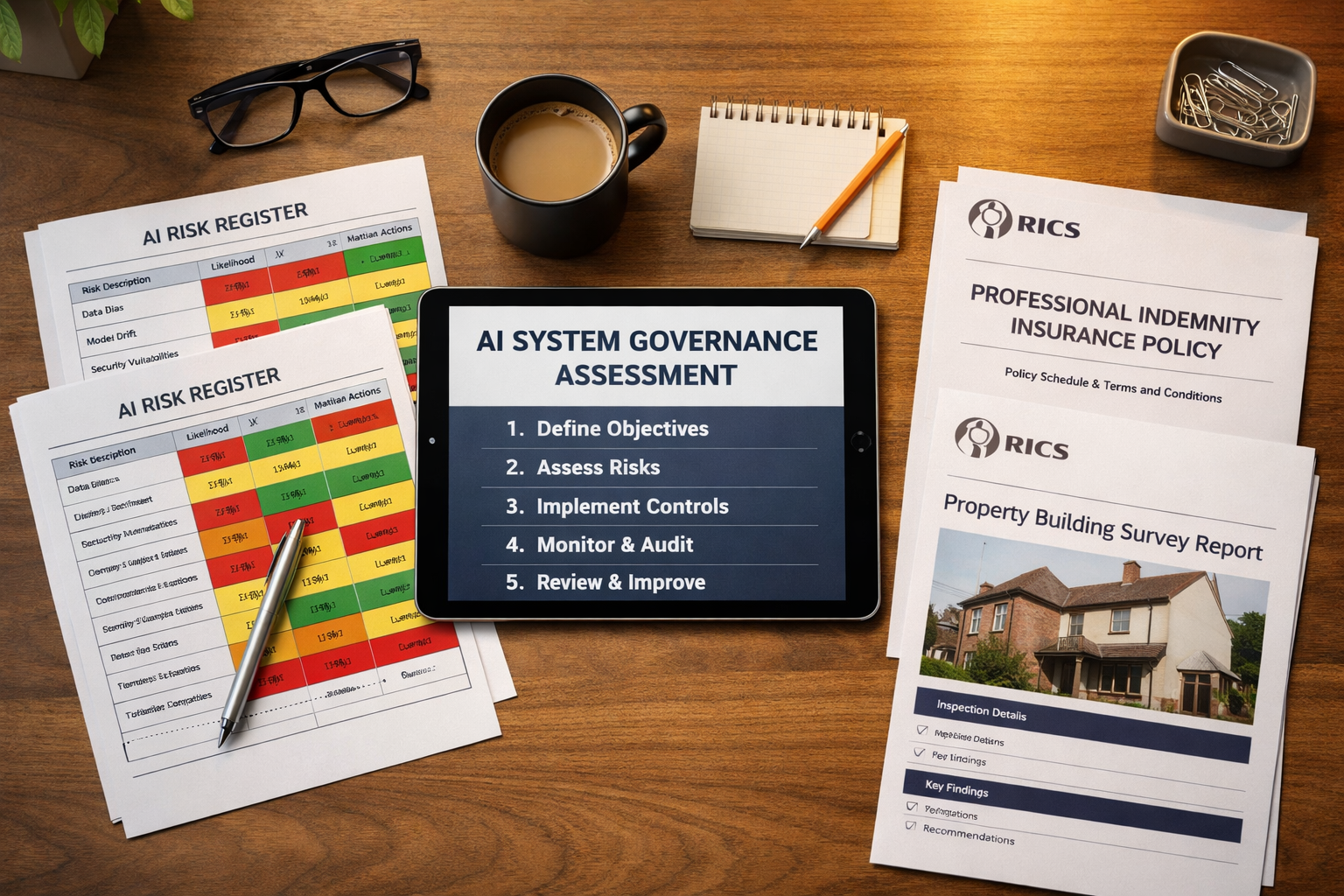

✅ Step 2: Complete the System Governance Assessment

For every AI system deployed, firms must complete a mandatory written system governance assessment covering [5]:

| Assessment Criterion | What to Document |
|---|---|
| Nature of services delivered | How AI integrates into the survey process |
| Task characteristics | Repetitive vs. complex, high-stakes vs. low-stakes |
| Alternative tools available | Non-AI alternatives considered |
| Environmental impact | Energy consumption, carbon footprint of AI use |
| Stakeholder impact | Effect on clients, third parties, the profession |
| Potential bias | Training data limitations, demographic skews |
| Error risk | Likelihood and consequence of incorrect outputs |

This assessment must be completed before deployment, not retrospectively [5].
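One way to enforce the "before deployment" rule internally is a completeness check over the seven criteria in the table above. The sketch below assumes each assessment is stored as a dictionary of free-text answers. The key names and both function names are hypothetical.

```python
# Keys mirror the seven assessment criteria; the names are illustrative.
REQUIRED_CRITERIA = (
    "nature_of_services",
    "task_characteristics",
    "alternative_tools",
    "environmental_impact",
    "stakeholder_impact",
    "potential_bias",
    "error_risk",
)


def undocumented_criteria(assessment: dict[str, str]) -> list[str]:
    """Return the criteria that are absent or left blank."""
    return [
        key for key in REQUIRED_CRITERIA
        if not assessment.get(key, "").strip()
    ]


def ready_for_deployment(assessment: dict[str, str]) -> bool:
    # Assumption: a system is deployable only once every criterion
    # has a written answer.
    return not undocumented_criteria(assessment)
```

The check only confirms that something has been written against each criterion. Whether the answer is adequate remains a matter for professional review.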

✅ Step 3: Establish and Maintain an AI Risk Register

Any firm using AI with material impact must create and maintain a formal risk register [4]. This is one of the most operationally significant requirements in the standard.

The risk register must document:

- Inherent bias in AI outputs

- Erroneous outputs and their potential consequences

- Data governance risks (including how client data is handled)

- System reliability issues

- Accountability gaps — who is responsible when things go wrong [4]

Each risk entry must include a Red, Amber, Green (RAG) rating or equivalent, alongside likelihood and impact assessments, mitigation plans, and a firm risk appetite statement [4].

🔴 Critical requirement: Risk registers must be reviewed and updated at least quarterly by designated staff [4]. Ad hoc or annual reviews do not meet the standard.
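A risk register entry with a RAG rating and a quarterly review check might be sketched as follows. The 1 to 5 scoring scale, the likelihood × impact score, the Red/Amber thresholds, and the 92-day reading of "quarterly" are common risk-management conventions assumed here for illustration; the standard does not prescribe them.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "quarterly" is read as no more than roughly three months.
REVIEW_INTERVAL = timedelta(days=92)


@dataclass
class RiskEntry:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minor) to 5 (severe)
    mitigation: str
    owner: str       # the designated member of staff
    last_reviewed: date

    def score(self) -> int:
        return self.likelihood * self.impact

    def rag(self) -> str:
        # Illustrative thresholds only; set these to match the
        # firm's own risk appetite statement.
        s = self.score()
        if s >= 15:
            return "Red"
        if s >= 6:
            return "Amber"
        return "Green"

    def review_overdue(self, today: date) -> bool:
        return today - self.last_reviewed > REVIEW_INTERVAL
```

Running `review_overdue` across every entry on a fixed schedule gives a simple way to show that reviews happen at least quarterly and not on an ad hoc basis.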

Clients increasingly ask about AI governance practices when choosing a surveyor. Preparing for an RICS home survey now includes asking whether the firm has a compliant risk register in place.

✅ Step 4: Conduct Written Due Diligence Before Procuring AI Systems

Before adopting any new AI tool, firms must conduct and record a written due diligence process that includes [4]:

- Practical testing of the system for fitness-for-purpose

- Review of supplier documentation and data governance policies

- Assessment of what happens when suppliers provide limited transparency

- Documentation of any residual risks in the risk register [4]

Where suppliers are opaque about how their systems work — a common issue with proprietary AI platforms — firms must identify and document those associated risks rather than simply proceeding on trust [4].

✅ Step 5: Issue Written Client Notifications

This is perhaps the most immediately visible compliance requirement. Firms must notify clients in writing and in advance whenever AI will be used in service delivery [2][4]. The notification must specify:

- The purpose of AI use in the survey process

- Whether clients have the option to opt out

- How AI outputs are overseen by qualified professionals [4]

This notification must be embedded in terms of engagement, contractual documents, and service agreements — not buried in small print or delivered verbally [4].
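Firms that generate terms of engagement from templates could validate each notification before it goes out. The sketch below checks the three required contents and the "in advance" timing. All names are hypothetical, and treating a same-day notification as late is a deliberately cautious assumption, not a rule taken from the standard.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ClientNotification:
    purpose_of_ai_use: str
    opt_out_available: Optional[bool]  # None means the position is not stated
    oversight_description: str
    sent_on: date
    service_start: date

    def problems(self) -> list[str]:
        """List reasons the notification would fall short, if any."""
        issues = []
        if not self.purpose_of_ai_use.strip():
            issues.append("purpose of AI use not stated")
        if self.opt_out_available is None:
            issues.append("opt-out position not stated")
        if not self.oversight_description.strip():
            issues.append("professional oversight not described")
        # Cautious assumption: notification must predate the service.
        if self.sent_on >= self.service_start:
            issues.append("not sent in advance of service delivery")
        return issues
```

An empty list from `problems()` means the record covers the listed items. It does not confirm that the wording is prominent enough to avoid the "small print" problem, which still needs a human check.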

For clients choosing between a homebuyers report and a building survey, this transparency requirement means clearer information about the tools being used to assess the property they intend to buy.

✅ Step 6: Apply and Document Professional Judgement

The standard is unambiguous: AI outputs do not replace professional judgement [2]. Firms must:

- Apply professional judgement when assessing any AI output that has material impact

- Maintain sufficient knowledge of the AI systems in use to support responsible oversight

- Provide written information on request detailing the AI system type, working methods, limitations, due diligence processes, and reliability decisions [4]

This requirement is particularly relevant in building surveys, where AI tools might flag potential structural defects or damp issues. The surveyor must be capable of critically evaluating those outputs — not simply relaying them to clients.

✅ Step 7: Review Professional Indemnity Insurance

Firms must review their professional indemnity insurance (PII) policies to ensure adequate coverage for AI-related risks [5]. This is now an explicit compliance checklist item.

Questions to raise with insurers include:

- Does the policy cover claims arising from AI-assisted outputs?

- Are there exclusions for automated or machine-generated content?

- Does coverage extend to third-party AI tools used in service delivery?

Failure to address PII gaps could leave firms exposed in the event of a claim related to an AI error — even if the firm otherwise complied with the standard [5].

✅ Step 8: Implement Data Governance and Anonymisation Protocols

Responsible AI use policies must cover data governance, including [2][5]:

- Anonymisation protocols for client data used in or processed by AI systems

- System reliability standards and monitoring procedures

- Clear accountability frameworks identifying who is responsible for AI oversight

For building surveyors, this means ensuring that property data, client information, and survey findings processed through AI tools are handled in compliance with both the RICS standard and applicable data protection legislation.

✅ Step 9: Address Legislative Conflicts

Where applicable legislation conflicts with the RICS standard, legislation takes precedence [4]. However, firms cannot simply ignore the conflict. They must:

- Document the specific conflict in writing

- Formally report it to RICS [4]

This ensures regulatory transparency and contributes to the evolution of the standard over time.

Practical Guidance for Building Surveyors Using AI Tools in 2026

Common AI Applications in Building Surveys

Building surveyors are increasingly using AI in the following areas:

- 🏠 Automated defect detection via image recognition software

- 📊 Report drafting assistance using large language models

- 🚁 Drone-based roof and facade analysis with AI interpretation

- 📍 Thermal imaging analysis for damp and insulation assessment

- 📋 Comparable data analysis for condition ratings

Each of these applications must be assessed under the governance framework. The level of oversight required scales with the materiality and risk of the specific task.

Proportionality and Small Practices

The standard acknowledges that a sole practitioner cannot implement governance at the same scale as a large firm. However, proportionality does not mean exemption [2]. Small practices must still complete all mandatory steps — the documentation may simply be less complex.

A sole trader using an AI report-drafting tool must still:

- Assess materiality

- Maintain a risk register

- Notify clients in writing

- Apply professional judgement to all outputs

For surveyors working across London and the South East, including those providing property surveys in Kensington or Chelsea, client expectations around transparency and professionalism are particularly high. That makes early compliance a competitive advantage as much as a regulatory obligation.

Internal AI Development

Firms developing proprietary AI systems face additional obligations [4]. Before deployment, they must record:

- The scope of the AI application

- Potential risks and benefits

- Alternative approaches considered

- Sustainability impact assessments [4]

This applies to firms building bespoke survey tools, custom reporting systems, or integrated data platforms.

RICS Responsible AI Standards in Building Surveys: March 2026 Implementation Checklist — Quick Reference Summary

| # | Requirement | Mandatory? | Frequency |
|---|---|---|---|
| 1 | Written material impact assessment | ✅ Yes | On adoption of each AI tool |
| 2 | System governance assessment | ✅ Yes | Before deployment |
| 3 | AI risk register (RAG rated) | ✅ Yes (if material) | Quarterly review minimum |
| 4 | Written due diligence on AI procurement | ✅ Yes | Before procurement |
| 5 | Written client notification | ✅ Yes | Per engagement |
| 6 | Professional judgement documentation | ✅ Yes | Per AI-assisted output |
| 7 | PII policy review | ✅ Yes | Annually / on policy renewal |
| 8 | Data governance & anonymisation policy | ✅ Yes | Ongoing |
| 9 | Legislative conflict reporting | ✅ Yes (if applicable) | As conflicts arise |

What Happens If Firms Don't Comply?

Non-compliance with a mandatory RICS professional standard exposes firms and individual members to:

- RICS disciplinary proceedings

- Reputational damage and loss of regulated status

- Professional indemnity claims from clients harmed by unmanaged AI risks

- Regulatory scrutiny under broader UK AI and data protection frameworks

The standard is clear that ignorance of its requirements is not a defence [2][5]. Firms that have not yet completed their implementation should treat this as an urgent priority.

Clients who receive a poor survey outcome and later discover that AI was used without proper disclosure or oversight may have grounds for complaint, particularly if a bad building survey report prompts them to investigate the methodology used.

Conclusion: Turning Compliance Into Competitive Advantage

The RICS Responsible AI Standards in Building Surveys: March 2026 Implementation Checklist for UK Surveyors is not a bureaucratic burden — it is a framework for building client trust in an era of rapid technological change. Surveyors who implement it thoroughly will be better positioned to defend their professional judgements, attract quality-conscious clients, and adapt as AI tools continue to evolve.

Actionable Next Steps for UK Building Surveyors 🎯

- Audit your current AI tool usage — list every tool and assess materiality this week.

- Draft or update your risk register — use the RAG framework and assign a named owner.

- Update your terms of engagement — embed the client notification requirement immediately.

- Contact your PII insurer — request written confirmation of AI coverage.

- Schedule quarterly risk register reviews — put them in the diary now.

- Train your team — ensure all staff understand the standard's requirements and their individual responsibilities.

The profession's credibility depends on surveyors being the experts who oversee AI — not the practitioners who are overseen by it. Compliance with the March 2026 standard is the foundation of that professional identity.


References

[1] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html

[2] RICS Brings First Global AI Standard For Surveyors Into Effect – https://www.associationexecutives.org/resource/rics-brings-first-global-ai-standard-for-surveyors-into-effect.html

[3] Responsible Use Of AI – https://www.rics.org/profession-standards/rics-standards-and-guidance/conduct-competence/responsible-use-of-ai

[4] Responsible Use Of Artificial Intelligence In Surveying Practice September 2025 – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf

[5] RICS Responsible Use Of AI Explained For APC Candidates – https://resources.apcguide.com/rics-responsible-use-of-ai-explained-for-apc-candidates/

[6] New AI Guidance: Five Things Every QS Needs To Do Now – https://www.building.co.uk/comment/new-ai-guidance-five-things-every-qs-needs-to-do-now/5141082.article