Party wall disputes cost UK property owners an estimated £120 million annually in legal fees and surveyor appointments, with approximately 35% of these conflicts arising from preventable administrative errors and invalid notices. The Royal Institution of Chartered Surveyors (RICS) has responded with its first-ever professional standard on AI, Responsible use of artificial intelligence in surveying practice, which became mandatory on 9 March 2026 for all RICS members and regulated firms[1][2]. This article looks at what the standard means for dispute prediction and award drafting in party wall work.

The standard changes how party wall surveyors approach risk assessment, notice validation, and award preparation. By setting clear protocols for AI deployment, it balances technological efficiency with professional judgement, so that automation supports rather than replaces the human oversight that party wall agreements require.

Key Takeaways

- Mandatory compliance: The RICS AI standard became compulsory on 9 March 2026 for all members wherever AI has a material impact on the surveying services they deliver[1][2]

- Four core pillars: Governance and risk management, professional judgement and oversight, transparency and client communication, and responsible AI development[2]

- Risk registers required: Firms must maintain centralized documentation of AI-related risks, regularly reviewed and updated[1]

- Human oversight essential: AI tools must never operate without qualified surveyor supervision, particularly in dispute prediction and award drafting

- Explainability mandatory: Clients have the right to request detailed information about AI systems, limitations, and reliability decisions[1][3]

Understanding the RICS 2026 AI Standards Framework

The RICS 2026 AI standard establishes a governance framework that firms must follow when they apply artificial intelligence to party wall matters. In this field its requirements have to be read alongside the Party Wall etc. Act 1996, because an automated error in a notice or award can carry legal consequences.

The Four Core Requirement Areas

The RICS framework divides responsible AI implementation into four distinct pillars, each with specific obligations for surveying firms[2]:

1. Governance and Risk Management 📋

This pillar requires firms to establish formal oversight structures before deploying AI tools. System governance assessments must evaluate:

- The specific surveying services where AI will be applied

- The nature of tasks being automated or augmented

- Available alternative tools and methodologies

- Environmental impact of AI system operation

- Potential stakeholder impacts (building owners, adjoining owners, contractors)

- Bias assessment in training data and algorithmic outputs

- Error risk profiles and failure scenarios[1]

2. Professional Judgement and Oversight 👨‍💼

Human expertise remains paramount under the new standards. Qualified surveyors must:

- Maintain final decision-making authority on all material outputs

- Review AI-generated risk assessments before client communication

- Validate automated notice compliance checks against current legislation

- Exercise professional scepticism regarding AI recommendations

- Document instances where professional judgement overrides AI suggestions

3. Transparency and Client Communication 💬

Clients engaging party wall surveyors have explicit rights under the 2026 standards. Firms must provide written information upon request detailing:

- AI system types deployed in their case

- Known limitations and accuracy boundaries

- Due diligence processes for AI tool selection

- Risk management protocols specific to their matter

- How reliability decisions are made regarding AI outputs[1][3]

4. Responsible Development of AI 🔧

For firms developing proprietary AI tools, additional obligations apply regarding ethical development practices, data protection compliance, and ongoing performance monitoring.

Implementing Responsible AI Use in Party Wall Surveys for Dispute Prediction

Dispute prediction is one of the most promising applications of AI in party wall work. Machine learning algorithms can analyse historical case data, notice patterns, and property characteristics to identify high-risk scenarios before conflicts escalate. However, the RICS 2026 AI standard places strict controls on such predictive systems.

Mandatory Risk Register Requirements

Every firm using AI for dispute prediction must maintain a comprehensive risk register that centralizes knowledge about potential failure modes[1]. This register should document:

| Risk Category | Assessment Requirements | Review Frequency |
|---|---|---|
| False Positives | Percentage of incorrectly flagged disputes | Monthly |
| False Negatives | Missed high-risk situations | Monthly |
| Bias Detection | Demographic or property-type skews | Quarterly |
| Data Quality | Training data completeness and accuracy | Quarterly |
| Regulatory Drift | Alignment with current Party Wall Act interpretations | Annually |

The risk register serves multiple purposes: it demonstrates compliance with RICS standards, supports professional indemnity insurance claims, and creates an audit trail for legal disputes where AI recommendations are challenged.
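As a rough illustration of how such a register could be tracked in software, the sketch below stores each entry with its review cadence and lists the entries whose review is overdue. The class name `RiskEntry`, the function `overdue_entries`, and the day counts per cadence are assumptions made for this example; the standard does not prescribe any particular format.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Cadences follow the register table; the day counts are illustrative assumptions.
REVIEW_INTERVAL_DAYS = {"monthly": 31, "quarterly": 92, "annually": 366}


@dataclass
class RiskEntry:
    category: str        # e.g. "False Positives"
    assessment: str      # what is measured for this risk
    frequency: str       # key into REVIEW_INTERVAL_DAYS
    last_reviewed: date

    def next_review_due(self) -> date:
        return self.last_reviewed + timedelta(days=REVIEW_INTERVAL_DAYS[self.frequency])


def overdue_entries(register, today):
    """Return the entries whose scheduled review date has already passed."""
    return [entry for entry in register if entry.next_review_due() < today]


# Hypothetical register contents for demonstration only.
register = [
    RiskEntry("False Positives", "Share of incorrectly flagged disputes",
              "monthly", date(2026, 3, 9)),
    RiskEntry("Bias Detection", "Demographic or property-type skews",
              "quarterly", date(2026, 3, 9)),
    RiskEntry("Regulatory Drift", "Alignment with current Party Wall Act interpretations",
              "annually", date(2026, 3, 9)),
]

overdue = overdue_entries(register, today=date(2026, 5, 1))
print([entry.category for entry in overdue])
```

A check like this only flags that a review is due. The review itself, and any decision to change how the AI tool is used, stays with the responsible surveyor.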

System Governance Assessment Process

Before implementing any AI-powered dispute prediction tool, firms must complete a thorough governance assessment[1]. This evaluation should answer critical questions:

Service Evaluation Questions:

- Which specific party wall services will use this AI system?

- Does the tool analyze notice validity, predict owner responses, or forecast dispute likelihood?

- What percentage of cases will rely on AI-generated risk scores?

Alternative Analysis:

- What non-AI methods currently serve this function?

- How does AI performance compare to experienced surveyor judgement?

- What backup procedures exist if the AI system fails?

Stakeholder Impact Assessment:

- Could AI errors lead to invalid notices being issued?

- Might automated risk scoring disadvantage certain property types?

- How will building owners and adjoining owners be informed of AI involvement?

Bias and Error Risk Evaluation:

- Was the AI trained on diverse property types and geographic areas?

- Does historical data reflect current market conditions and legal interpretations?

- What error rates are acceptable for different prediction types?
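One way to put numbers behind the bias and error questions above is to compare false positive and false negative rates across property types. The sketch below is a minimal example under stated assumptions: the function name `error_rates_by_group`, the tuple layout, and the sample cases are all hypothetical, and a real evaluation would need far more data than this.

```python
from collections import defaultdict


def error_rates_by_group(cases):
    """Compute false positive and false negative rates per property type.

    Each case is a (property_type, predicted_dispute, actual_dispute) tuple.
    A rate is None when the group has no cases of the relevant kind.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "neg": 0, "pos": 0})
    for property_type, predicted, actual in cases:
        c = counts[property_type]
        if actual:
            c["pos"] += 1
            if not predicted:
                c["fn"] += 1   # a dispute occurred but was not flagged
        else:
            c["neg"] += 1
            if predicted:
                c["fp"] += 1   # a dispute was flagged but did not occur
    return {
        group: {
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else None,
            "false_negative_rate": c["fn"] / c["pos"] if c["pos"] else None,
        }
        for group, c in counts.items()
    }


# Hypothetical historical outcomes, for illustration only.
cases = [
    ("terraced", True, True),
    ("terraced", True, False),
    ("terraced", False, False),
    ("terraced", False, True),
    ("semi-detached", False, False),
    ("semi-detached", False, False),
    ("semi-detached", True, True),
    ("semi-detached", False, False),
]

rates = error_rates_by_group(cases)
for group, values in rates.items():
    print(group, values)
```

A large gap between groups does not prove bias on its own, but it is the kind of signal a governance assessment would be expected to investigate and record.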

Preventing Invalid Notices Through AI Validation

One of the most valuable applications of AI under the RICS 2026 standard is automated validation of party wall notices before service. AI systems can check:

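✅ Statutory compliance: Ensuring notices contain all required information under sections 1, 3, or 6 of the Party Wall etc. Act 1996 (section 3 governs notices for works under section 2)

✅ Timing requirements: Verifying the applicable notice period (at least two months for party structure notices under section 3; at least one month for line of junction notices under section 1 and adjacent excavation notices under section 6)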

✅ Description accuracy: Confirming work descriptions are sufficiently detailed and unambiguous

✅ Recipient identification: Validating that all adjoining owners have been correctly identified and addressed

However, the RICS standards mandate that no notice should be served based solely on AI validation[3]. A qualified surveyor must review and approve every notice, applying professional judgement to nuances that algorithms may miss—such as the interpretation of "special foundations" or the practical impact of proposed works.
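The checks described above can be sketched as a simple pre-check that never clears a notice for service by itself. In this example the field checklist `REQUIRED_FIELDS`, the dictionary layout, and all function and class names are assumptions for illustration, not a statement of what the Act requires a notice to contain. The minimum periods come from the Act (one month under sections 1 and 6, two months under section 3), but the date arithmetic is a simplification: how service dates and periods are counted in a given case is a legal question for the surveyor.

```python
import calendar
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

# Minimum notice periods in months under the Party Wall etc. Act 1996.
NOTICE_PERIOD_MONTHS = {"section_1": 1, "section_3": 2, "section_6": 1}

# Hypothetical checklist for illustration; not the statutory contents of a notice.
REQUIRED_FIELDS = ("building_owner", "adjoining_owner", "property_address", "work_description")


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping to the last day of a shorter month."""
    index = start.month - 1 + months
    year, month = start.year + index // 12, index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


@dataclass
class PrecheckResult:
    issues: list = field(default_factory=list)
    earliest_start: Optional[date] = None
    surveyor_approved: bool = False  # set by a qualified surveyor, never by the tool

    @property
    def ready_to_serve(self) -> bool:
        return not self.issues and self.surveyor_approved


def precheck_notice(notice: dict, service_date: date) -> PrecheckResult:
    result = PrecheckResult()
    for name in REQUIRED_FIELDS:
        if not notice.get(name):
            result.issues.append(f"missing {name}")
    months = NOTICE_PERIOD_MONTHS.get(notice.get("notice_type"))
    if months is None:
        result.issues.append("unknown notice type")
    else:
        result.earliest_start = add_months(service_date, months)
        planned = notice.get("planned_start")
        if planned and planned < result.earliest_start:
            result.issues.append("planned start is inside the notice period")
    return result


# Hypothetical draft notice with an empty description and an early start date.
notice = {
    "notice_type": "section_3",
    "building_owner": "A. Owner",
    "adjoining_owner": "B. Neighbour",
    "property_address": "1 Example Street",
    "work_description": "",
    "planned_start": date(2026, 5, 1),
}
result = precheck_notice(notice, service_date=date(2026, 3, 16))
print(result.issues, result.earliest_start, result.ready_to_serve)
```

The design choice worth noting is that `ready_to_serve` stays false until a surveyor sets `surveyor_approved`, even when the automated checks find nothing wrong.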

Responsible Use Policies

To prevent unsupervised AI deployment, firms must establish written responsible use policies that[1]:

- Clarify accountability: Specify which individuals are responsible for AI outputs

- Reinforce human oversight: Define mandatory review checkpoints

- Protect client confidentiality: Ensure AI systems comply with GDPR and data protection requirements

- Support regulatory compliance: Align AI use with RICS professional standards and Party Wall Act obligations

- Establish escalation procedures: Define when AI recommendations should be overridden

These policies should be reviewed annually and updated whenever new AI tools are adopted or regulatory requirements change.

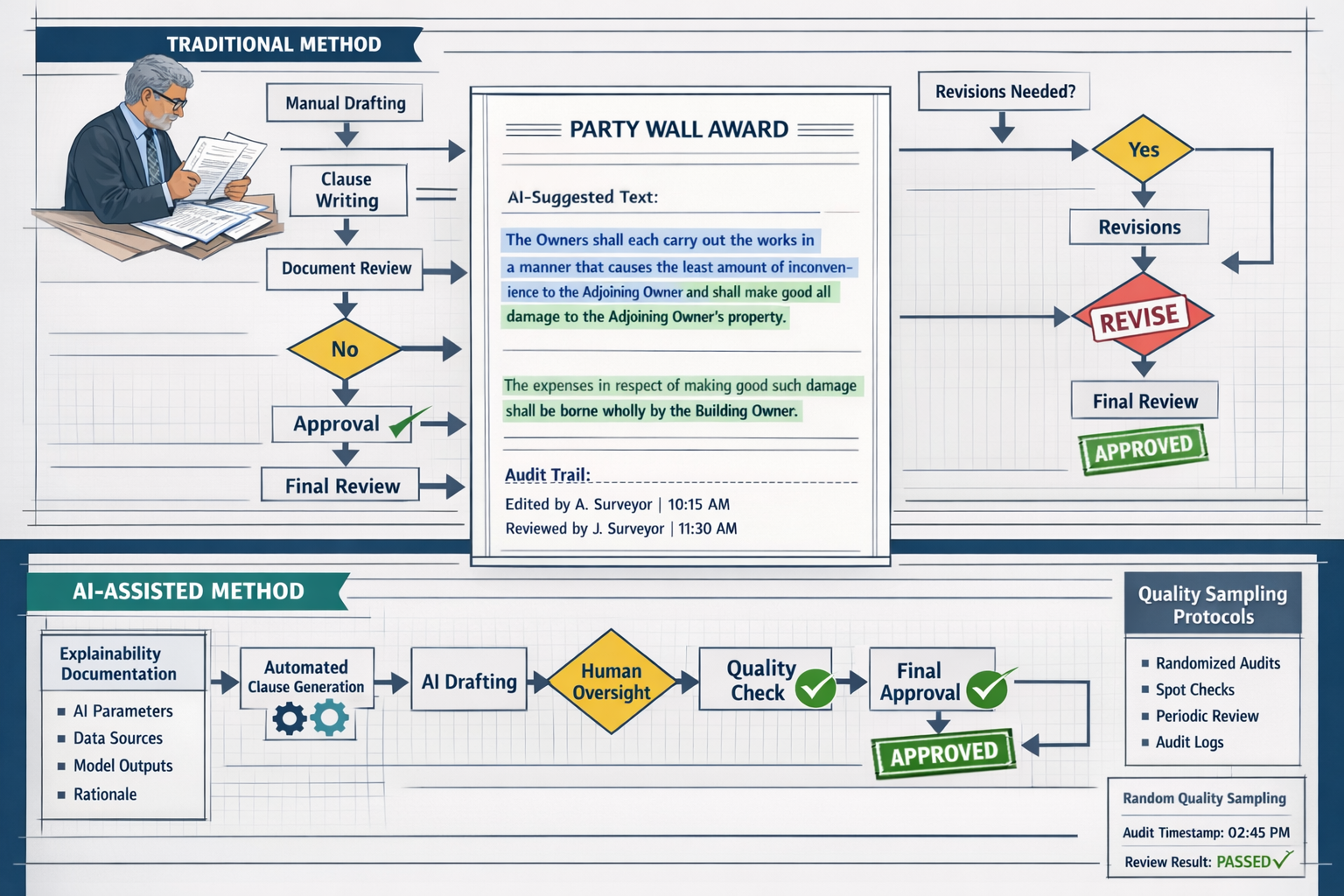

Award Drafting Under the RICS 2026 AI Standards

Party wall awards are legally binding documents that determine rights, responsibilities, and remedies for all parties. The precision required in award drafting makes this area particularly sensitive for AI application, and the RICS 2026 AI standard sets rigorous safeguards around it.

Explainability Documentation Requirements

When AI assists with award drafting—whether through clause suggestion, precedent matching, or automated condition scheduling—firms must create comprehensive explainability documentation[1][3]. This audit trail should include:

Pre-Implementation Documentation:

- AI system selection criteria and evaluation process

- Training data sources and quality assessment

- Validation testing results against known award outcomes

- Identified limitations and known failure modes

Operational Documentation:

- Specific AI contributions to each award section

- Human modifications to AI-generated content

- Reasoning for accepting or rejecting AI suggestions

- Quality assurance checks performed

Client-Facing Documentation:

- Plain language explanation of AI involvement

- Limitations disclosure appropriate to client sophistication

- Assurance of surveyor oversight and final responsibility

- Contact information for questions about AI use

This documentation must be retained for the same period as other surveying records (typically 6-15 years depending on jurisdiction) and made available to clients upon request.
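The operational part of this audit trail could be captured as one structured record per award section. The following is a minimal sketch, assuming a hypothetical `AwardSectionRecord` shape and a JSON line per record; the field names and the three decision labels are illustrative choices, not terms taken from the standard.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

ALLOWED_DECISIONS = {"accepted", "modified", "rejected"}


@dataclass(frozen=True)
class AwardSectionRecord:
    award_ref: str
    section: str
    ai_contribution: str   # what the tool suggested
    decision: str          # one of ALLOWED_DECISIONS
    reasoning: str         # why the surveyor accepted, modified, or rejected it
    reviewed_by: str
    recorded_at: str       # ISO 8601 timestamp


def record_decision(award_ref, section, ai_contribution, decision, reasoning,
                    reviewed_by, now: Optional[datetime] = None) -> AwardSectionRecord:
    """Build an audit record, refusing entries without a decision or a reason."""
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"decision must be one of {sorted(ALLOWED_DECISIONS)}")
    if not reasoning.strip():
        raise ValueError("reasoning is required for every AI suggestion")
    now = now or datetime.now(timezone.utc)
    return AwardSectionRecord(award_ref, section, ai_contribution, decision,
                              reasoning, reviewed_by, now.isoformat())


# Hypothetical example entry.
entry = record_decision(
    award_ref="PWA-2026-014",
    section="Access provisions",
    ai_contribution="Suggested standard access clause",
    decision="modified",
    reasoning="Working hours narrowed to suit the adjoining owner's home office",
    reviewed_by="J. Smith MRICS",
    now=datetime(2026, 4, 2, 10, 30, tzinfo=timezone.utc),
)
line = json.dumps(asdict(entry), sort_keys=True)
print(line)
```

Appending lines like this to a write-once log would give a firm a searchable history of what the tool proposed and what the surveyor decided, though how records are stored and retained remains a matter for the firm's own policies.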

Randomized Quality Assurance Protocols

The RICS standards require regular dip sampling of AI-assisted outputs to maintain quality standards[3]. For award drafting, this means:

Monthly Sampling Requirements:

- Random selection of 10-15% of AI-assisted awards

- Independent review by qualified surveyor not involved in original drafting

- Comparison against manual drafting quality benchmarks

- Documentation of discrepancies and error patterns

Quality Metrics to Track:

- Clause accuracy and legal validity

- Completeness of condition descriptions

- Appropriateness of access provisions

- Clarity of dispute resolution procedures

- Consistency with Party Wall Act requirements

When quality issues are identified, firms must investigate root causes, retrain AI systems if necessary, and notify affected clients if material errors occurred.
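The monthly dip sample described above could be drawn with a few lines of code. This is a sketch under assumptions: the function names, the award reference format, and the reviewer names are hypothetical, and the percentage is simply a parameter set within the 10-15% range mentioned in this section.

```python
import random


def dip_sample(award_refs, percent=10, seed=None):
    """Randomly pick a share of AI-assisted awards for independent review.

    The sample size is rounded up and is at least one when any awards exist.
    """
    if not 0 < percent <= 100:
        raise ValueError("percent must be between 1 and 100")
    refs = list(award_refs)
    if not refs:
        return []
    size = max(1, (len(refs) * percent + 99) // 100)  # integer ceiling
    rng = random.Random(seed)  # a recorded seed makes the draw reproducible
    return sorted(rng.sample(refs, size))


def eligible_reviewers(drafter, surveyors):
    """Reviewers must not have been involved in the original drafting."""
    return [name for name in surveyors if name != drafter]


# Hypothetical month of 40 AI-assisted awards.
awards = [f"PWA-2026-{n:03d}" for n in range(1, 41)]
sample = dip_sample(awards, percent=10, seed=2026)
print(sample)
print(eligible_reviewers("J. Smith", ["J. Smith", "A. Patel", "R. Jones"]))
```

Recording the seed alongside the sample lets an auditor confirm that the selection was random rather than hand-picked.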

Balancing Efficiency and Professional Responsibility

AI can significantly accelerate award drafting by:

- Suggesting standard clauses based on work type

- Generating schedule of condition templates

- Identifying relevant precedents from previous awards

- Highlighting potential ambiguities or inconsistencies

However, the RICS standards emphasize that efficiency must never compromise professional responsibility[4]. Surveyors remain personally liable for award content regardless of AI involvement. This means:

⚠️ Never accept AI-generated clauses without understanding their legal implications

⚠️ Always customize standard language to reflect specific property circumstances

⚠️ Verify that AI-suggested precedents remain current with case law developments

⚠️ Ensure award language is accessible to non-technical parties

Integration with Traditional Surveying Practice

The most successful implementations under the RICS 2026 AI standard treat AI as an augmentation tool rather than a replacement for traditional surveying skills. Best practices include:

Hybrid Workflows:

- AI performs initial risk assessment and notice validation

- Surveyor reviews and refines AI outputs

- Site inspection and condition assessment conducted traditionally

- AI suggests award structure and standard clauses

- Surveyor customizes and finalizes award content

- Independent quality review before service

This approach leverages AI efficiency while maintaining the professional judgement that distinguishes qualified surveyors from automated systems.
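The hybrid workflow above can be sketched as a stage gate that enforces the order of steps and refuses to pass a human stage without a named surveyor. The stage names, the `MatterWorkflow` class, and the rule that the independent reviewer must differ from the finalising surveyor are assumptions made for this illustration.

```python
from enum import Enum


class Stage(Enum):
    AI_INITIAL_ASSESSMENT = 1
    SURVEYOR_REVIEW = 2
    SITE_INSPECTION = 3
    AI_AWARD_SUGGESTIONS = 4
    SURVEYOR_FINALISATION = 5
    INDEPENDENT_REVIEW = 6


# Stages that need a named, qualified person to sign them off.
HUMAN_STAGES = {
    Stage.SURVEYOR_REVIEW,
    Stage.SITE_INSPECTION,
    Stage.SURVEYOR_FINALISATION,
    Stage.INDEPENDENT_REVIEW,
}


class MatterWorkflow:
    def __init__(self):
        self.completed = []  # list of (stage, signed_off_by) in order

    @property
    def ready_for_service(self):
        return len(self.completed) == len(Stage)

    def complete(self, stage, signed_off_by=None):
        if self.ready_for_service:
            raise ValueError("workflow already complete")
        expected = Stage(len(self.completed) + 1)
        if stage is not expected:
            raise ValueError(f"expected {expected.name}, got {stage.name}")
        if stage in HUMAN_STAGES and not signed_off_by:
            raise PermissionError(f"{stage.name} needs a named surveyor")
        if stage is Stage.INDEPENDENT_REVIEW and signed_off_by == self.completed[-1][1]:
            raise PermissionError("independent review needs a different surveyor")
        self.completed.append((stage, signed_off_by))


# Hypothetical matter moving through the first two stages.
workflow = MatterWorkflow()
workflow.complete(Stage.AI_INITIAL_ASSESSMENT)
workflow.complete(Stage.SURVEYOR_REVIEW, signed_off_by="J. Smith MRICS")
print(workflow.ready_for_service)
```

Encoding the order this way means an AI-drafted award cannot reach service without passing through surveyor review, finalisation, and an independent check.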

Compliance Strategies for Surveying Firms

Achieving compliance with the RICS 2026 AI standard requires systematic implementation across firm operations. The following strategies support effective compliance:

Establishing Governance Structures

Designate an AI Compliance Officer responsible for:

- Monitoring regulatory developments

- Maintaining risk registers and documentation

- Coordinating quality assurance activities

- Liaising with RICS on compliance questions

- Training staff on responsible AI use

Create an AI Review Committee that:

- Evaluates new AI tools before adoption

- Reviews quality assurance findings quarterly

- Updates responsible use policies

- Investigates AI-related complaints or errors

- Approves changes to AI deployment

Staff Training and Competency Development

All surveyors using AI tools must receive training covering:

📚 Technical Understanding: How AI systems generate predictions and recommendations

📚 Limitation Awareness: Common failure modes and accuracy boundaries

📚 Professional Responsibility: Surveyor liability regardless of AI involvement

📚 Quality Assurance: How to effectively review AI outputs

📚 Client Communication: Explaining AI use in accessible language

Training should be refreshed annually and documented for professional development records.

Technology Selection Criteria

When evaluating AI tools for party wall work, firms should assess:

Vendor Compliance:

- Does the vendor understand RICS 2026 AI standards?

- Can they provide explainability documentation?

- What quality assurance support do they offer?

- How do they handle data protection and confidentiality?

System Performance:

- What validation testing has been conducted?

- What error rates are documented?

- How does performance vary across property types?

- Can the system explain its recommendations?

Integration Capabilities:

- Does it integrate with existing case management systems?

- Can outputs be easily reviewed and modified?

- Does it support audit trail requirements?

- What backup procedures exist if the system fails?

Firms should document their selection process as part of governance assessment requirements[1].

Client Communication Templates

To meet transparency obligations, firms should develop standard templates explaining AI use:

Initial Engagement Letter Addition:

"Our firm may use artificial intelligence tools to assist with risk assessment, notice validation, and award drafting in your matter. All AI outputs are reviewed and approved by qualified surveyors before use. You have the right to request detailed information about our AI systems, their limitations, and how they are applied to your case. AI use does not diminish our professional responsibility for the services we provide."

AI Disclosure Statement:

"This [notice/award/report] was prepared with assistance from AI technology that [specific function]. The AI system has [accuracy rate/limitation]. All content has been reviewed and approved by [surveyor name], who takes full professional responsibility for its accuracy and appropriateness."

These templates should be reviewed by legal counsel to ensure they provide adequate disclosure without creating unnecessary liability exposure.

Future Developments and Industry Impact

The introduction of the RICS 2026 AI standard marks the beginning of a broader transformation in surveying practice. Several trends are likely to emerge:

Increased Standardization

As AI systems analyze thousands of party wall cases, patterns will emerge that support greater standardization of:

- Notice templates optimized for clarity and compliance

- Award structures tailored to specific work types

- Risk assessment frameworks validated across diverse properties

- Condition survey methodologies

This standardization may reduce disputes arising from ambiguous language or incomplete documentation.

Enhanced Predictive Capabilities

Machine learning models will become increasingly sophisticated at identifying dispute risk factors, potentially including:

- Property ownership patterns associated with conflict

- Contractor selection and its correlation with compliance issues

- Seasonal variations in dispute likelihood

- Geographic factors influencing owner responses

These insights may enable proactive dispute prevention strategies.

Integration with Building Information Modeling (BIM)

AI systems may increasingly integrate with BIM data to:

- Automatically identify party wall implications of proposed works

- Generate 3D visualizations for owner communication

- Predict structural impacts with greater precision

- Optimize work sequencing to minimize disruption

This integration could significantly enhance the technical accuracy of party wall assessments.

Regulatory Evolution

The RICS standards will likely evolve based on implementation experience. Potential future developments include:

- More specific accuracy thresholds for different AI applications

- Mandatory reporting of AI-related errors or failures

- Certification programs for AI tools used in surveying

- Enhanced guidance on bias detection and mitigation

Firms should monitor RICS communications for updates and participate in consultation processes.

Professional Differentiation

As AI handles routine tasks, surveyor value will increasingly derive from:

- Complex judgement in unusual situations

- Effective client communication and relationship management

- Creative problem-solving for challenging party wall scenarios

- Ethical decision-making when AI recommendations are questionable

Surveyors who successfully combine AI efficiency with enhanced human expertise will gain competitive advantage.

Practical Implementation Checklist

For firms beginning their compliance work under the RICS 2026 AI standard, the following checklist provides a structured approach:

Phase 1: Assessment (Weeks 1-4)

✅ Inventory all current AI tools and systems in use

✅ Identify surveying services where AI has material impact

✅ Review existing governance structures and documentation

✅ Assess current compliance gaps against RICS standards

✅ Estimate resources required for full compliance

Phase 2: Policy Development (Weeks 5-8)

✅ Draft responsible use policies for AI deployment

✅ Create risk register template and initial entries

✅ Develop system governance assessment procedures

✅ Prepare client communication templates

✅ Establish quality assurance sampling protocols

Phase 3: Implementation (Weeks 9-16)

✅ Designate AI compliance officer and review committee

✅ Conduct staff training on new policies and procedures

✅ Implement documentation systems for explainability requirements

✅ Begin randomized quality assurance sampling

✅ Update engagement letters and client communications

Phase 4: Monitoring and Refinement (Ongoing)

✅ Monthly risk register reviews and updates

✅ Quarterly quality assurance analysis

✅ Annual policy review and staff retraining

✅ Continuous monitoring of RICS guidance updates

✅ Regular assessment of AI tool performance

This phased approach allows firms to achieve compliance systematically while maintaining service delivery continuity.

Conclusion

The RICS 2026 AI standard represents a watershed moment for the surveying profession. By establishing clear governance frameworks, mandatory oversight requirements, and transparency obligations, RICS has created a pathway for responsible AI adoption that protects clients while enabling technological innovation.

The standards recognize that AI offers genuine value in party wall work—from validating notice compliance to predicting dispute risks and accelerating award preparation. However, they also acknowledge that party wall matters carry significant legal and financial consequences that demand human professional judgement as the ultimate safeguard.

For surveying firms, compliance requires investment in governance structures, staff training, and quality assurance processes. Yet this investment yields substantial returns: reduced error rates, enhanced efficiency, improved client satisfaction, and competitive differentiation in an increasingly technology-driven market.

The most successful firms will be those that view the RICS AI standards not as a compliance burden but as a framework for excellence—an opportunity to combine cutting-edge technology with the professional expertise that has always defined quality surveying practice.

Next Steps for Surveyors

If you're a party wall surveyor looking to implement responsible AI use:

- Conduct a compliance audit using the checklist provided above

- Engage with technology vendors about their RICS standard alignment

- Develop firm-specific policies tailored to your practice areas

- Invest in staff training to build AI literacy and critical evaluation skills

- Join professional discussions about AI implementation best practices

- Monitor RICS guidance for updates and clarifications

If you're a property owner or developer:

- Ask your surveyor about their AI use and compliance with RICS standards

- Request explainability documentation to understand how AI influenced your matter

- Ensure human oversight by confirming qualified surveyor review of all outputs

- Understand your rights regarding transparency and information access

The integration of AI into party wall surveying is inevitable and, when implemented responsibly, beneficial for all stakeholders. The RICS 2026 standards provide the roadmap for this transformation—balancing innovation with the professional responsibility that remains the foundation of surveying practice.

For expert guidance on party wall matters conducted in full compliance with the latest RICS standards, including responsible AI use, explore our comprehensive RICS surveys and specialized party wall services. Our team combines advanced technology with decades of professional experience to deliver accurate, reliable, and compliant surveying services across London and surrounding areas.

References

[1] RICS Responsible Use of AI Explained for APC Candidates – https://resources.apcguide.com/rics-responsible-use-of-ai-explained-for-apc-candidates/

[2] RICS First-Ever Standard on Responsible AI Use Now in Effect – https://www.rics.org/news-insights/rics-first-ever-standard-on-responsible-ai-use-now-in-effect

[3] Responsible Use of Artificial Intelligence in Surveying Practice (September 2025) – https://www.rics.org/content/dam/ricsglobal/documents/standards/Responsible-use-of-artificial-intelligence-in-surveying-practice_September-2025.pdf

[4] AI Responsible Use Standard – https://ww3.rics.org/uk/en/journals/construction-journal/ai-responsible-use-standard.html